In today's ever-more digitalized world, we all have a tale or two to share about how our personal computers have let us down: how they refused to run several programs at once, or how the data load was so heavy that the damned device kept us waiting forever before performing even the most trivial operation.

Well, there is one machine in the world, and it's in Japan, that is absolutely free of such concerns. It is the fastest computer on Earth, capable of handling a mind-boggling number of tasks in far less than the blink of an eye.

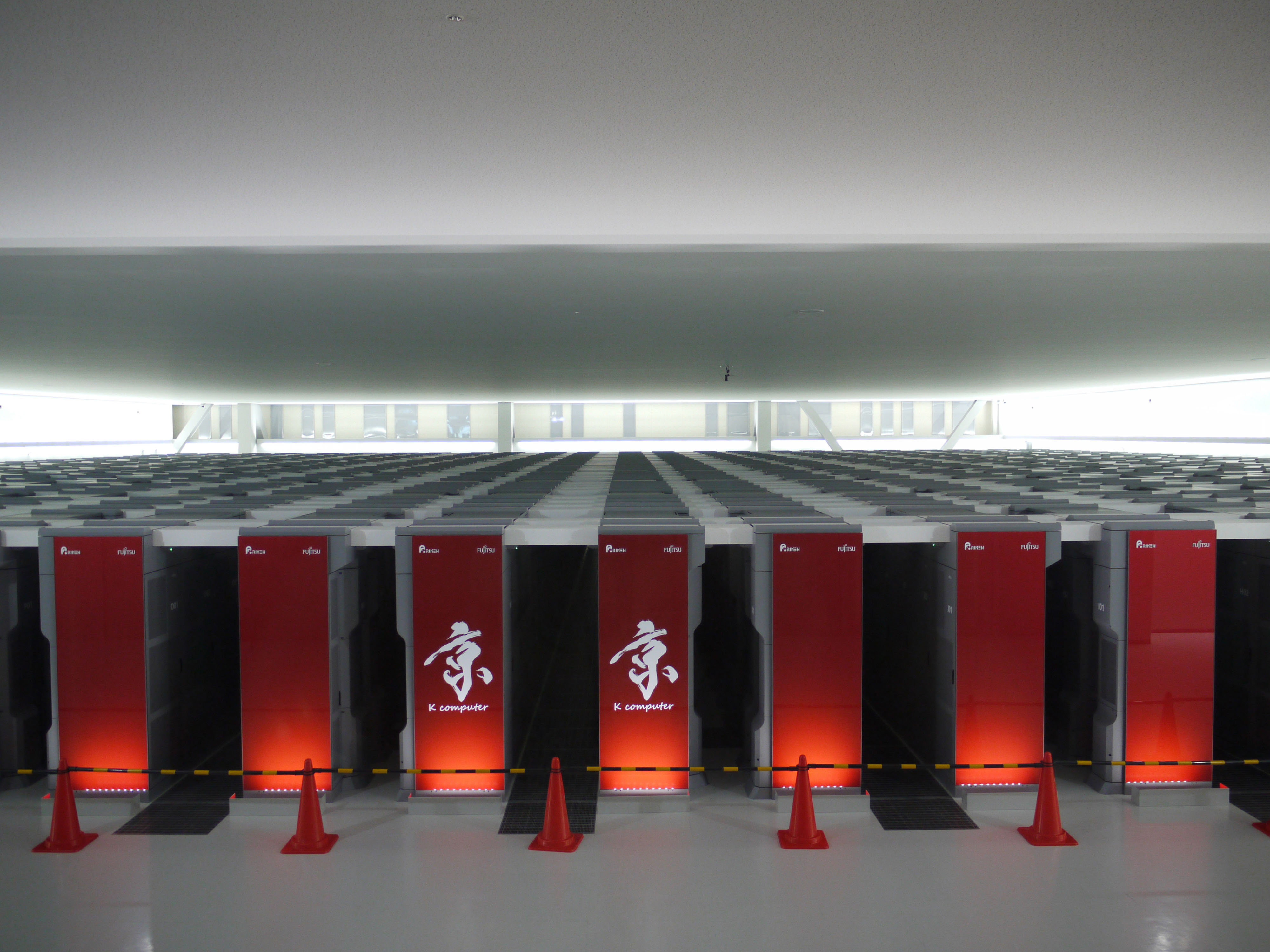

In June and November last year, the K computer, jointly developed by RIKEN and the IT giant Fujitsu and housed at the RIKEN Advanced Institute for Computational Science (AICS) in Kobe, was ranked No. 1 in the TOP500 list of the world's fastest computers. The list is published twice a year: in June at the International Supercomputing Conference (ISC) in Germany, and in November at the SC conference of supercomputing experts, also known as the International Conference for High Performance Computing, Networking, Storage, and Analysis.