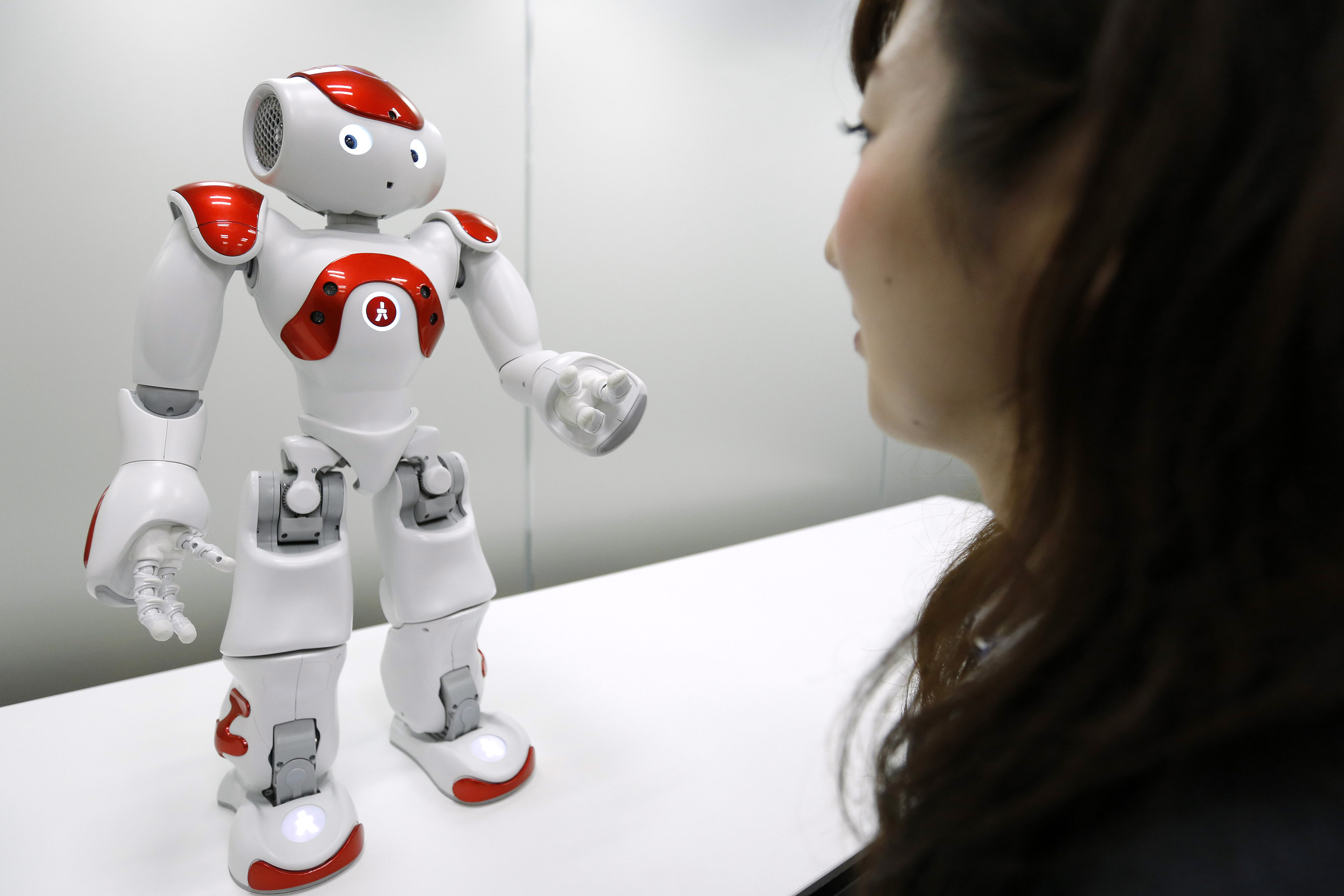

"Hello and welcome. I can tell you about money exchange, ATMs, opening a bank account or overseas remittance. Which one would you like?"

This greeting comes not from a human employee but from a diminutive robot named Nao. Just 58 cm tall, the humanoid will be deployed on a trial basis at one or two branches of Mitsubishi UFJ Financial Group Inc. in April to help customers with their enquiries.

It — I'm really struggling not to say "she" — is just one of an increasing number of robots working in customer-service positions in Japan.
