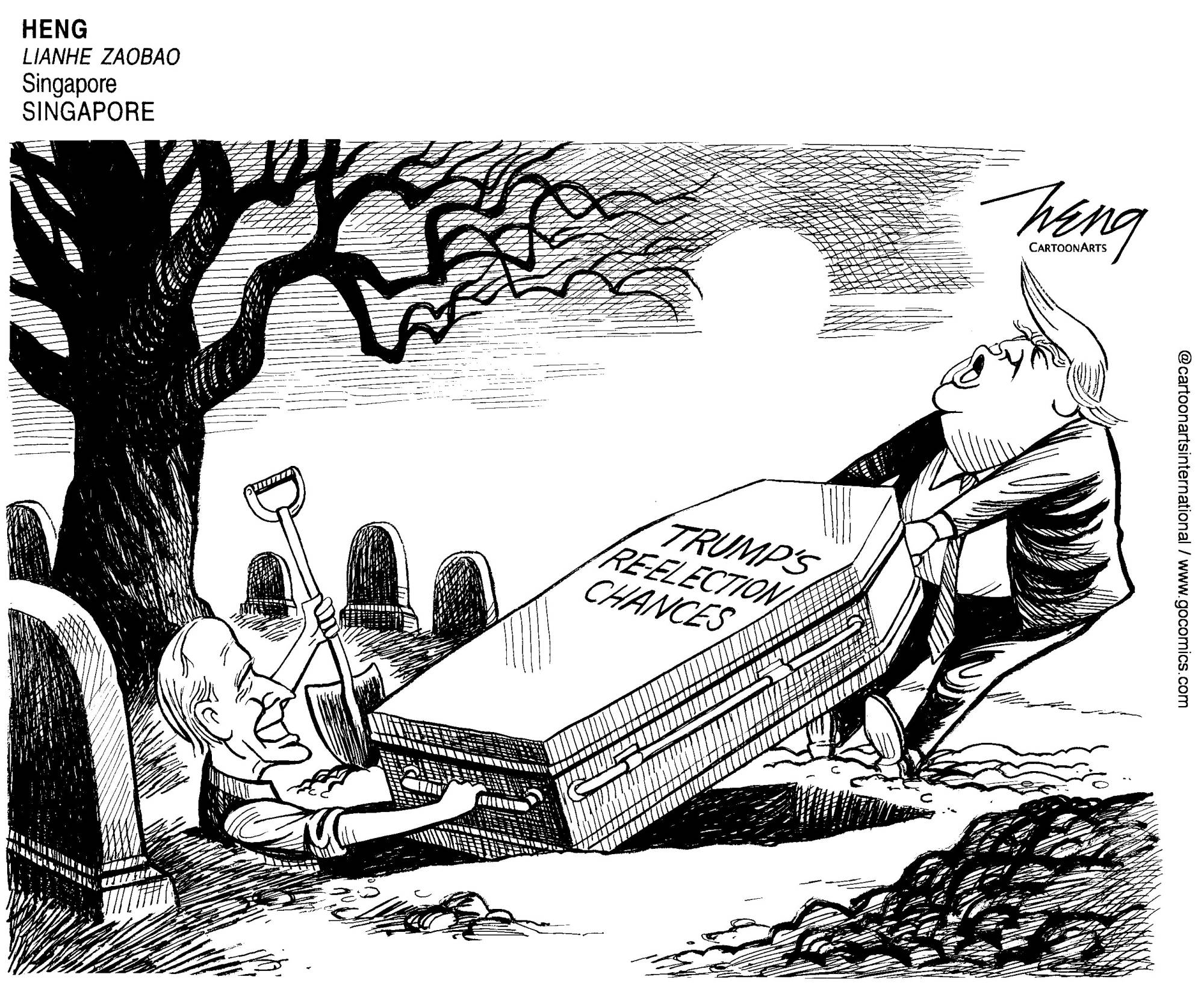

Psychologist Philip Tetlock’s warning that “the average expert is roughly as accurate as a dart-throwing chimpanzee” has assumed Talmudic weight as the world awaits the results of next month’s U.S. presidential election. Donald Trump’s victory in the 2016 vote, after elections guru Nate Silver gave him “only” a 29% chance of winning the Electoral College, was widely viewed as a stake through the heart of statistics-led political punditry, and it continues to fuel a belief on both the right and the left that his re-election is imminent.

A more accurate conclusion is that most observers misunderstood and misapplied Silver’s analysis: Silver was saying that in nearly one out of every three elections, Trump would win. That failure wasn’t the fault of experts and pundits alone. Rather, it reflects shortcomings that are built into the way we think about the world, and that can be fixed, at least to some degree.

Given that virtually every decision is to some degree a prediction (choosing where to go for dinner, for instance, rests on assumptions about a future experience), it is remarkable just how bad we as a species are at it. Ancient civilizations credited the crazed and addled with insight, or sought destinies in entrails, excrement or the stars. We’ve advanced considerably in technique, although horoscopes are still popular, but the future remains as unknowable as ever.
