A new global campaign to persuade nations to ban "killer robots" before they reach the production stage is to be launched in the United Kingdom by a group of academics, pressure groups and Nobel peace prize laureates.

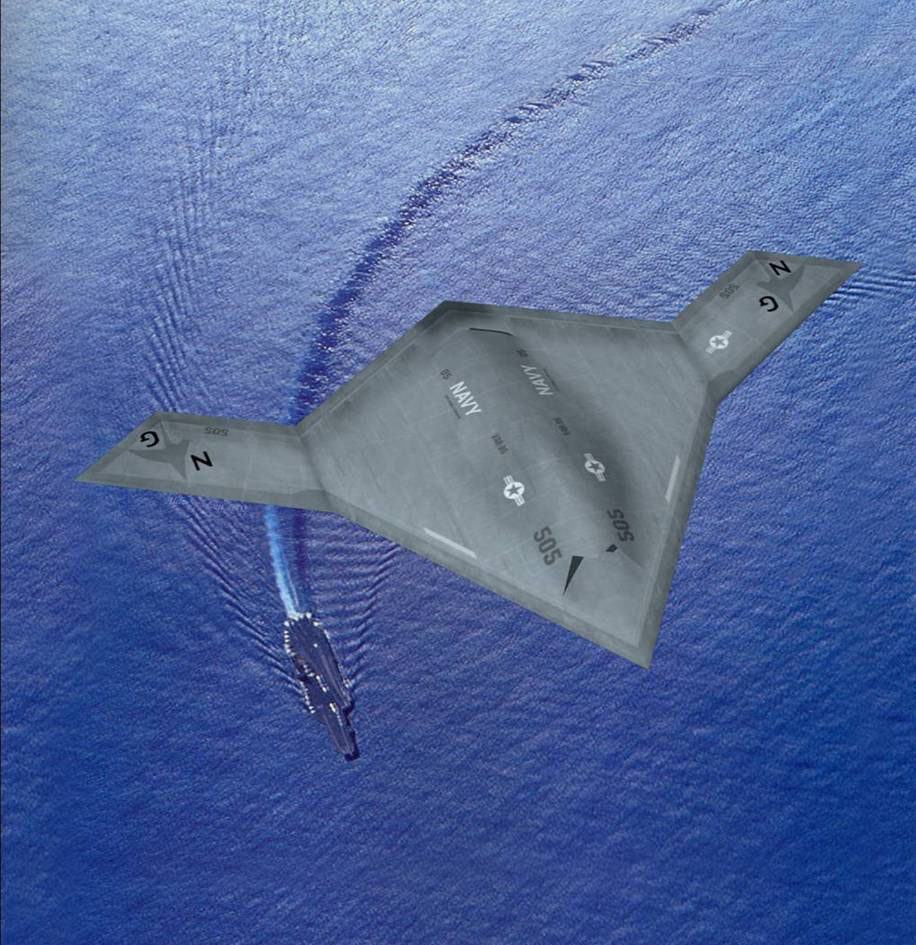

Robot warfare and autonomous weapons, the next step from unmanned drones, are already being worked on by scientists and will be available within the decade, said Dr. Noel Sharkey, a leading robotics and artificial intelligence expert and professor at Sheffield University in northern England. He believes that development of the weapons is taking place in an effectively unregulated environment, with little attention being paid to moral implications and international law.

The Stop the Killer Robots campaign will be launched in April at the House of Commons and includes many of the groups that successfully campaigned for international action against cluster bombs and landmines. The campaigners hope to secure a similar global treaty against autonomous weapons.
