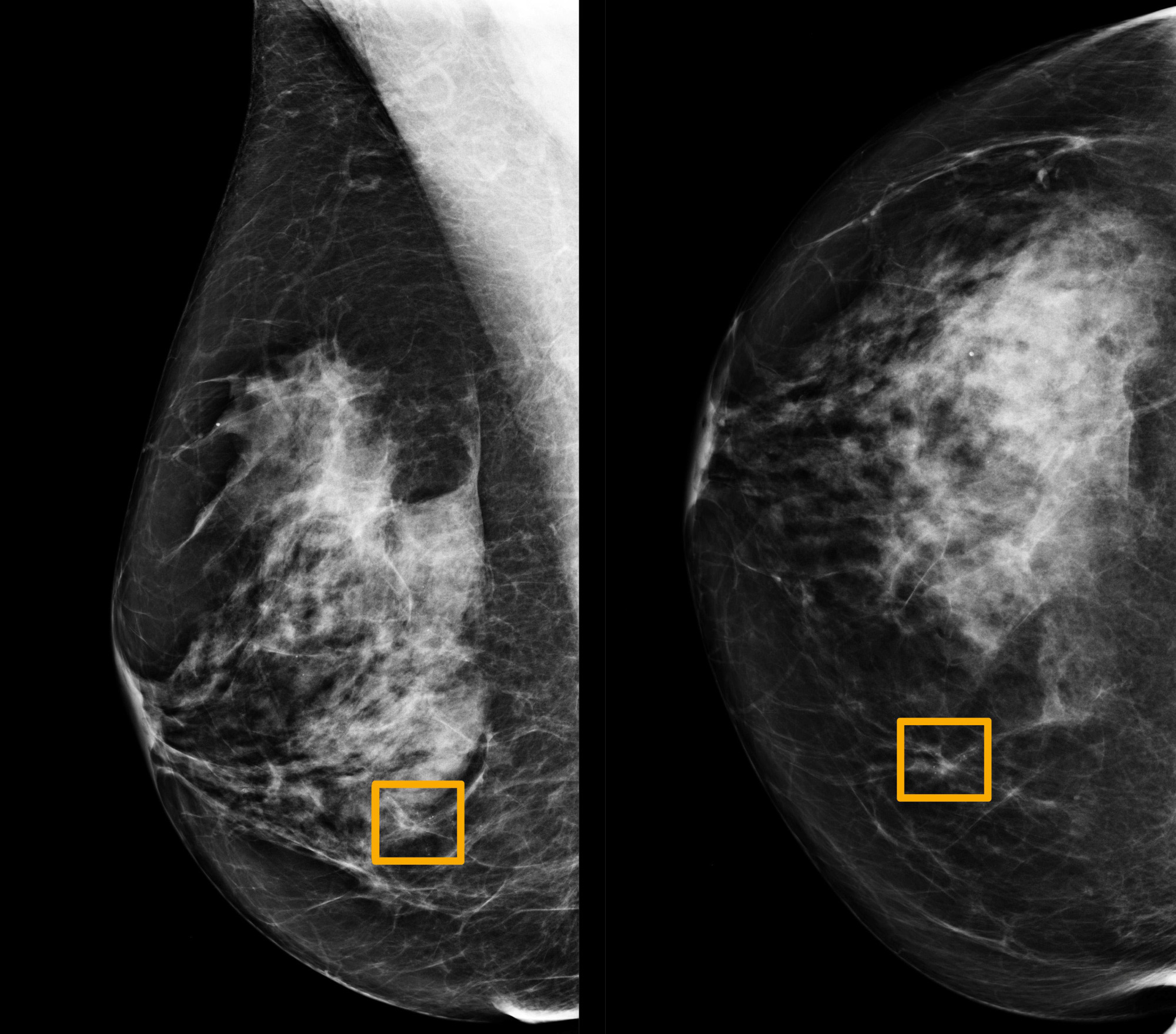

A Google artificial intelligence system has proved as good as expert radiologists at predicting, from screening mammograms, which women would develop breast cancer, and it also showed promise at reducing errors, researchers in the United States and Britain reported.

The study, which was published in the scientific journal Nature on Wednesday, is the latest to show that artificial intelligence has the potential to improve the accuracy of screening for breast cancer, a disease that affects about 1 in every 8 women worldwide.

Radiologists miss about 20 percent of breast cancers in mammograms, the American Cancer Society says, and half of all women who get the screenings over a 10-year period have a false positive result, meaning the screening indicates breast cancer when none is present.